"Total 65" in Game Credits Wasn't a Joke — It Was a Process Failure

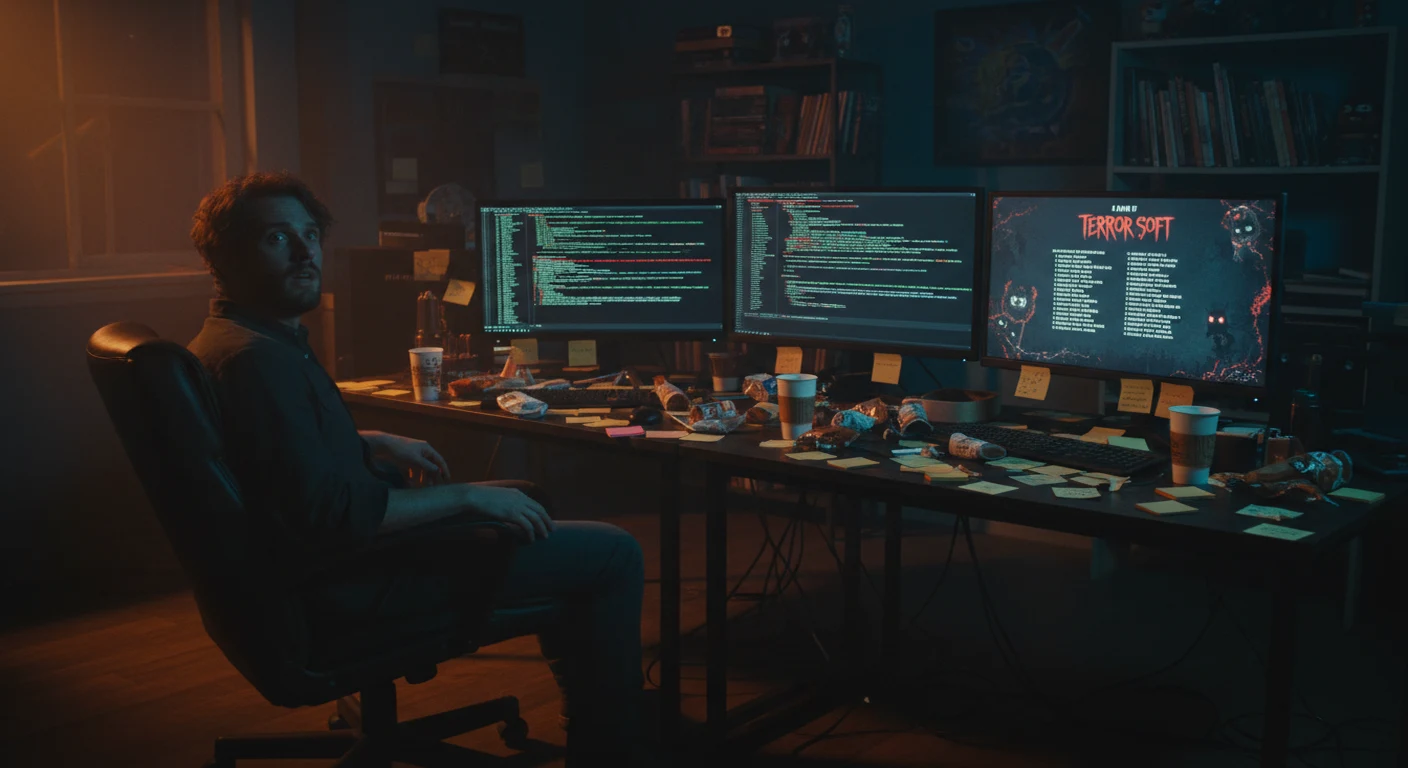

The "Total 65" credits mistake is funny right up until you imagine your own studio shipping it. A Japanese indie developer appears to have used AI to produce fake credits names, then ended up with "Total 65" displayed in the final game instead of a proper list of contributors. Players laughed. Developers winced.

The viral part of the story is the screenshot. The useful part is what caused it: no clean source of contributor data, a last-minute AI prompt, and no final verification pass. That combination is common in small studios. If you're using ChatGPT, Claude, Gemini, or Notion AI in production, this case is a better lesson than most polished AI tutorials because it shows exactly where these tools help and exactly where they create mess.

I've seen versions of this problem before: credits pulled from old Slack messages, role names copied from Discord, display names mixed with legal names, and one exhausted programmer trying to sort it all out the night before submission.

Why the "Total 65" Game Credits Story Matters

The obvious takeaway is "proofread your credits." True, but too shallow.

The more useful takeaway is this: the studio likely asked AI to invent or assemble data that should have existed already. That's the category error. Large language models are decent at formatting, reorganizing, checking, and templating. They are unreliable when asked to create factual production records from thin air.

If your source data is missing, AI does not fix that. It hides the gap until something embarrassing appears on screen.

That matters because credits are not cosmetic. They affect:

- contributor recognition

- contract compliance

- portfolio proof for freelancers

- internal trust after launch

- disputes over role titles or missing names

A misspelled menu label is annoying. A missing name in credits can damage a working relationship.

The Real Failure: Studios Keep Treating Credits as a Last-Week Task

In every small-team pipeline, there are boring documents everyone postpones until they become urgent. Credits tracking is one of them.

Here are three real patterns I keep seeing:

- The stale spreadsheet: someone creates a credits sheet early, nobody updates it for months, and by ship week it no longer matches reality.

- The "we'll ask later" Notion page: a placeholder exists, but nobody owns it, so names and roles never get filled in.

- The final-night compile: one producer, designer, or programmer manually assembles the credits from chat logs and memory.

Once you're in pattern three, AI starts looking attractive because it promises speed. But speed only helps if the underlying information is already correct.

That's the lesson from the "Total 65" credits fiasco: the mistake was visible at the end, but the failure started months earlier.

What AI Can Actually Do for Credits Documentation

Used correctly, AI is useful here. Not magical. Useful.

1. Clean a messy contributor list

If you already have names, roles, and departments, Claude or ChatGPT can turn a rough export into something readable fast.

Example input:

- Jane / J. Park / janeparkart

- role: environment art, env artist, prop support

- dates: missing on half the entries

Example useful output:

- standardize the display name to one approved version

- flag missing dates

- group role variants under one agreed title

- sort by department and alphabetical order

That saves manual cleanup time, especially if your raw source came from Slack, Discord, Google Forms, or a spreadsheet with inconsistent entries.
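Once the team has agreed on canonical titles, the grouping and sorting steps above can also be done deterministically, without a model in the loop. The following is a minimal sketch, not a finished tool. The `ROLE_ALIASES` table, the `clean_contributors` function, and the record keys (`display_name`, `department`, `role`, `start_date`) are all assumptions made for illustration, and mapping "prop support" to the same title as "env artist" is a decision your team would have to make.

```python
# Hypothetical alias table: variant spelling (lowercase) -> agreed title.
ROLE_ALIASES = {
    "environment art": "Environment Artist",
    "env artist": "Environment Artist",
    "prop support": "Environment Artist",
}


def clean_contributors(records):
    """Return (cleaned, flagged): sorted copies of the records, plus the
    display names of entries that have no start date."""
    cleaned, flagged = [], []
    for rec in records:
        role_key = rec["role"].strip().lower()
        row = dict(rec)  # copy, so the source records stay untouched
        # Unknown titles pass through unchanged so a human can decide.
        row["role"] = ROLE_ALIASES.get(role_key, rec["role"].strip())
        if not rec.get("start_date"):
            flagged.append(rec["display_name"])
        cleaned.append(row)
    # Sort by department, then alphabetically by display name.
    cleaned.sort(key=lambda r: (r["department"], r["display_name"].casefold()))
    return cleaned, flagged
```

Display names are never rewritten here. Choosing the one approved version of a name is still a human decision that belongs in the source document.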

2. Flag missing fields before ship

AI is good at pattern-checking. Paste a CSV or table and ask it to identify blank fields, duplicate people, inconsistent titles, or contributors without permission status.

This is not glamorous work, which is exactly why it gets skipped by humans.
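The same checks can be run as a small script, which is a useful cross-check on whatever the model reports. This sketch assumes a CSV export with the hypothetical column names `display_name`, `role`, `department`, and `credit_approved`. Adjust them to match your own sheet.

```python
import csv
import io
from collections import Counter

# Assumed column names; not taken from any specific tool's export format.
REQUIRED = ["display_name", "role", "department", "credit_approved"]


def audit_csv(text):
    """Return a list of human-readable issues. The data is never modified."""
    rows = list(csv.DictReader(io.StringIO(text)))
    issues = []
    for line_no, row in enumerate(rows, start=2):  # row 1 is the header
        for field in REQUIRED:
            if not (row.get(field) or "").strip():
                issues.append(f"row {line_no}: missing {field}")
    # Count display names case-insensitively to catch repeated people.
    names = Counter(
        (row.get("display_name") or "").strip().casefold() for row in rows
    )
    for name, count in names.items():
        if name and count > 1:
            issues.append(f"duplicate display_name: {name} ({count} rows)")
    return issues
```

The function only reports. Fixing the problems is left to whoever owns the source document.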

3. Draft the forms that prevent future chaos

This is one of the best uses of AI in small studios. Ask Claude or ChatGPT to draft:

- a contributor intake form

- a credit confirmation message

- a freelancer handoff checklist

- a role-title standardization guide

You still review the draft, but starting from a structured template is far better than improvising from memory.

4. Reformat final credits for implementation

If your game needs department-by-department text, CSV rows, JSON, or a specific in-engine formatting style, AI can convert approved data into that structure.

That's a formatting task. Formatting tasks are where these models earn their keep.

Where AI Breaks: Inventing Names, Roles, or Missing Facts

This is the part too many AI workflow articles gloss over.

If you prompt a model with something like:

Generate 100 names for our game credits for designers, programmers, and artists.

You are no longer using AI as an assistant for documentation. You are asking it to fabricate a production record.

That creates several problems at once:

- the names may be fake

- the names may resemble real people

- the role assignments may be invented

- the output may include headers, summaries, or stray labels instead of usable data

- your team may copy-paste the result because everyone is tired

"Total 65" looks like exactly that kind of failure: a label or count artifact got treated as final content.

It wasn't a clever edge case. It was the predictable result of using a language model for the wrong job.

A Credits Workflow That Would Have Prevented "Total 65"

You do not need a complex internal tool to avoid this.

Phase 1: Create one source of truth on day one

Use Airtable, Notion, Google Sheets, or even a shared document if your team is tiny. What matters is consistency, not brand choice.

Minimum fields:

| Field | Why it matters |
|---|---|
| Legal Name | Needed for contracts and verification |
| Preferred Display Name | What appears in the game |
| Role | Prevents role-title confusion later |
| Department | Lets you format credits cleanly |
| Start Date | Helps confirm actual participation |
| End Date | Useful for contractors and short-term contributors |
| Credit Approved | Confirms the person wants to be listed |
| Notes | Catches special cases like aliases or "special thanks" |

If you only add one habit, add this one: update the record when work happens, not at the end of the project.
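If the team prefers a file in the repository over a hosted sheet, the minimum fields above map directly onto a small record type that exports to CSV. This is one possible sketch. The `Contributor` class and `to_csv` helper are illustrative names, and storing dates as ISO strings is an assumption made to keep the example short.

```python
import csv
import io
from dataclasses import dataclass, asdict, fields


@dataclass
class Contributor:
    legal_name: str
    display_name: str
    role: str
    department: str
    start_date: str = ""  # assumed ISO date string; empty if unknown
    end_date: str = ""
    credit_approved: bool = False  # stays False until the person confirms
    notes: str = ""


def to_csv(contributors):
    """Serialize records to CSV text with a fixed column order."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(Contributor)])
    writer.writeheader()
    for person in contributors:
        writer.writerow(asdict(person))
    return buf.getvalue()
```

Defaulting `credit_approved` to `False` means nobody is treated as approved just because a field was never filled in.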

Phase 2: Run a monthly AI audit

Once a month, export the list and ask AI to check for problems.

Use a prompt like this:

Review this contributor table for missing display names, duplicate people, inconsistent role titles, and contributors with no credit approval status. Do not rewrite any names. Return a list of issues only.

That last instruction matters. If you don't explicitly say "do not rewrite any names," some tools will try to be helpful and normalize things you should verify manually.

Phase 3: Generate the final credits from approved data only

When you're ready to format the credits, use a prompt with hard boundaries:

Here is a contributor list with Display Name, Department, and Role. Format it into end credits by department in this order: Production, Design, Programming, Art, Audio, QA, Special Thanks. Alphabetize names within each department. Do not add, remove, infer, or rename anything. If the data is incomplete, list the incomplete entries separately.

That turns AI into a formatter and validator, not a co-author of your production record.
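Because this formatting step is fully specified, it can also be written as a short script and used to cross-check the model's output. The sketch below follows the department order from the prompt. The function name `format_credits`, the record keys, and the "Name - Role" line layout are assumptions for illustration.

```python
DEPARTMENT_ORDER = [
    "Production", "Design", "Programming", "Art", "Audio", "QA", "Special Thanks",
]


def format_credits(rows):
    """Return (credits_text, incomplete_rows) without altering any name."""
    complete, incomplete = [], []
    for row in rows:
        ok = (
            row.get("display_name")
            and row.get("role")
            and row.get("department") in DEPARTMENT_ORDER
        )
        # Incomplete or unknown-department rows are set aside, never guessed.
        (complete if ok else incomplete).append(row)

    blocks = []
    for dept in DEPARTMENT_ORDER:
        members = sorted(
            (r for r in complete if r["department"] == dept),
            key=lambda r: r["display_name"].casefold(),
        )
        if members:
            lines = [dept] + [f"{m['display_name']} - {m['role']}" for m in members]
            blocks.append("\n".join(lines))
    return "\n\n".join(blocks), incomplete
```

A row with a blank name or an unrecognized department ends up in `incomplete` instead of the credits text, which mirrors the "list the incomplete entries separately" instruction in the prompt.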

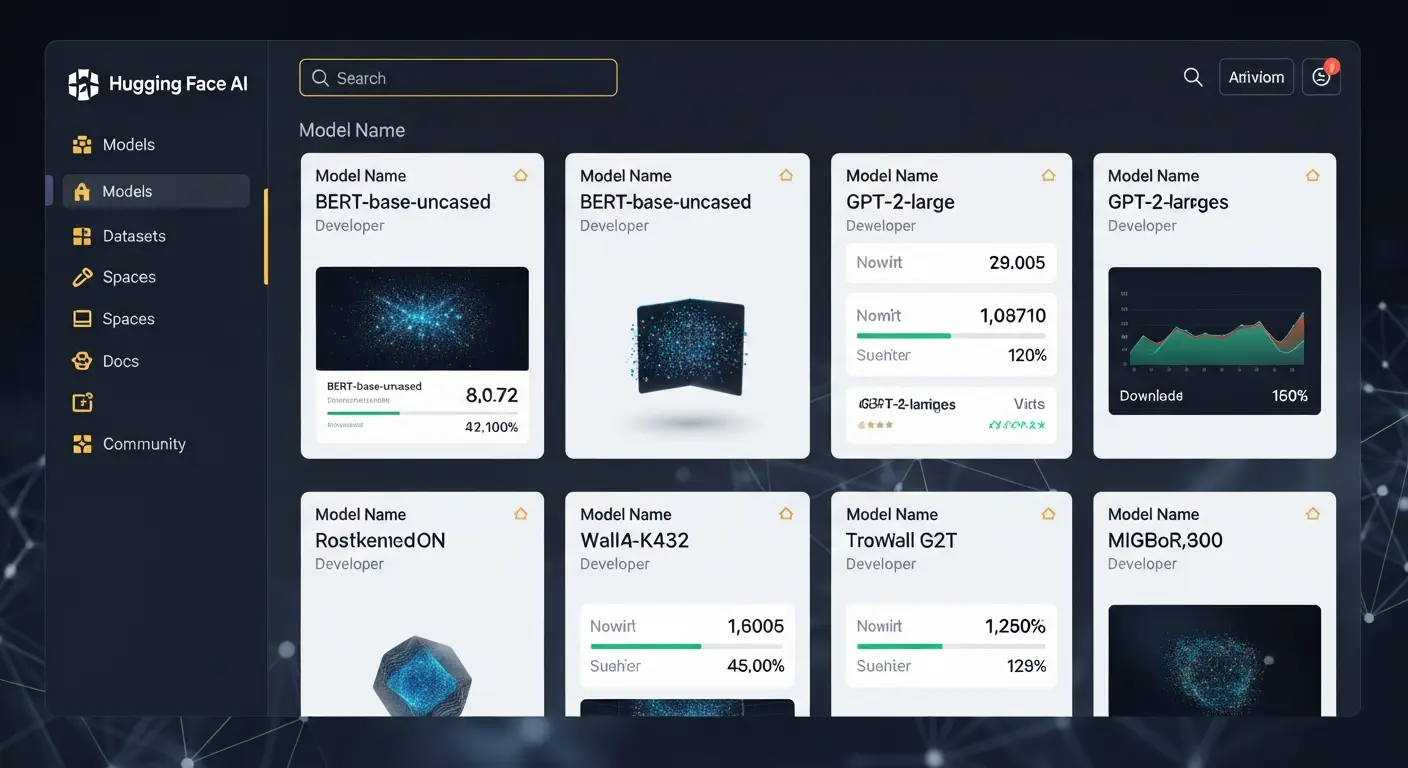

Tool Test: Which AI Handles Credits Cleanup Best?

I tested four tools on the same task: organizing a 73-person contributor list with duplicate entries, inconsistent role names, and missing fields.

| Tool | Formatting accuracy | Duplicate detection | Risk of unwanted changes | Best use |
|---|---|---|---|---|
| Claude 3.5 Sonnet | Very high | Good | Low with strict prompts | Final formatting, audits, long lists |
| ChatGPT-4o | Good | Fair | Medium | Form drafts, workflow design, quick cleanup |
| Gemini 1.5 Pro | Good | Fair | Medium | Teams already working inside Google apps |
| Notion AI | Limited | Low | Low | Light cleanup inside existing docs |

A few specifics matter here.

Claude followed formatting instructions most reliably. When told not to alter names, it usually obeyed. That makes it safer for credits work than more improvisational models.

ChatGPT-4o was faster to brainstorm forms and contributor workflows, but more likely to "improve" awkward titles. That's useful in some writing tasks and risky in production records.

Gemini made sense for teams already living in Google Sheets and Docs, but on edge cases it was less precise than Claude about department ordering and title consistency.

Notion AI was fine for tidying a small internal list, but not ideal for strict credits formatting logic.

For pricing, Claude Pro and ChatGPT Plus are each typically around $20/month. API pricing for team usage changes often, so check the current vendor page before relying on any numbers beyond that.

The Odd Bug Behind "Total 65": Output Artifacts

Most commentary on this incident stops at "someone forgot to proofread." That's true but incomplete.

Language models sometimes produce output artifacts: labels, counts, headings, partial structures, or meta text that slips into the response because the prompt is ambiguous or the model is juggling too many instructions.

Examples of artifacts I've seen in actual workflow tests:

- "Here are the 12 entries:" with no entries after it

- CSV headers without the rows

- a department title repeated twice and one department omitted

- a summary line included as if it were a person record

"Total 65" fits that pattern. A count or structural label appears to have been mistaken for content.

How to reduce the risk:

- Specify output format exactly. Say whether you want plain text, CSV, JSON, or department headings with names below.

- Tell the model what not to do. Example: do not add names, do not rename roles, do not summarize.

- Add a failure instruction. Example: if any record is incomplete, stop and list the incomplete records instead of guessing.

- Review the final output line by line. Especially names, titles, and department order.

The fourth step is slow. It's also the one that stops you from shipping a meme.
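Part of that line-by-line review can be automated as a first pass. The sketch below assumes the final credits text has one display name per line under department headings, and flags every line that is neither an approved name nor a known heading. The function name `find_stray_lines` and its parameters are hypothetical.

```python
def find_stray_lines(credits_text, approved_names, headings):
    """Return (stray, missing): unexpected lines with their line numbers,
    and approved names that never appear in the text."""
    allowed = set(approved_names) | set(headings)
    seen = set()
    stray = []
    for number, raw in enumerate(credits_text.splitlines(), start=1):
        line = raw.strip()
        if not line:
            continue  # blank separator lines are fine
        if line in allowed:
            seen.add(line)
        else:
            stray.append((number, line))
    missing = sorted(set(approved_names) - seen)
    return stray, missing
```

The exact-match comparison is deliberately strict: a line such as "Total 65" is reported as stray, and a silently renamed person shows up as both a stray line and a missing name. It supplements the human read-through and does not replace it.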

If You're Solo or Running a Tiny Team, Use This Minimum Version

You don't need a production coordinator or custom pipeline.

Use one document called Credits Master.

Every time someone contributes, add:

- display name

- role

- date

- whether they want to be credited

- any special notes

That takes a few minutes per person and saves hours later.

Three weeks before launch:

- export or copy the full list

- paste it into Claude or ChatGPT

- ask for missing fields, duplicates, and inconsistent titles

- fix the source document

- only then generate the final credits block

The key is simple: edit the source, not just the AI output. If the source stays messy, you'll repeat the same problem on the next patch, DLC, or platform release.

A Better Prompt Template for Credits Work

Most bad AI results start with bad prompts. Here is a safer template:

You are helping format game credits from an approved contributor list. Use only the data provided. Do not add names. Do not rename people. Do not change role titles unless asked. Flag duplicates, blanks, or inconsistent department labels separately. Then format the approved entries by department in the exact order I provide.

Why this works:

- it sets the model's role narrowly

- it forbids invention

- it separates error detection from formatting

- it reduces the chance of a stray summary line becoming final text

If you're handling freelancer-heavy projects, add one more instruction:

If two entries may refer to the same person, do not merge them automatically; list them as a possible duplicate.

That avoids incorrect merges where one contractor used a legal name in paperwork and a different display name in the game.
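The same "report, don't merge" rule can be applied in a script. This sketch uses `difflib.SequenceMatcher` from the Python standard library to compare every legal and display name across pairs of rows. The `possible_duplicates` name, the record keys, and the 0.8 threshold are assumptions, and string similarity is only a heuristic: it will miss a person whose names share nothing in common across entries.

```python
from difflib import SequenceMatcher
from itertools import combinations


def possible_duplicates(rows, threshold=0.8):
    """Return (index_a, index_b, score) for row pairs that may be the same
    person. Nothing is merged; the caller decides what to do."""
    pairs = []
    for (i, a), (j, b) in combinations(enumerate(rows), 2):
        # Compare every known name of one row against every name of the other.
        names_a = {a["legal_name"].casefold(), a["display_name"].casefold()} - {""}
        names_b = {b["legal_name"].casefold(), b["display_name"].casefold()} - {""}
        best = max(
            (SequenceMatcher(None, x, y).ratio() for x in names_a for y in names_b),
            default=0.0,
        )
        if best >= threshold:
            pairs.append((i, j, round(best, 2)))
    return pairs
```

Each reported pair still needs a person to confirm whether the two rows are the same contributor before anything is changed in the source document.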

What Designers and Programmers Should Change This Week

Don't wait for a producer to build a perfect process.

If you're a designer, create a standard list of role titles your project will use. Half of credits cleanup pain comes from title drift: "UX Design," "UI/UX," "Interface Design," and "Menus" all referring to the same work.

If you're a programmer, store contributor data in something exportable. A clean CSV beats a heroic last-minute scrape from chat logs.

If you're a producer or studio lead, make one person responsible for credits ownership. Shared responsibility usually means nobody updates the document until panic mode.

And if you're already using AI, stop asking it to fill gaps in records you never kept.

The Only Useful Takeaway From the Viral Screenshot

The internet enjoyed the joke because "Total 65" looked absurd. But absurd errors usually come from ordinary habits: weak records, unclear ownership, and a rushed copy-paste at the end.

That's why this story matters. The "Total 65" credits mistake is not really about one studio embarrassing itself. It's about a workflow a lot of small teams quietly recognize.

Use AI for cleanup, auditing, form drafting, and formatting. Do not use it to invent contributor history. If your credits source is accurate and your prompts are strict, these tools can save real time. If your source is a mess, AI just helps you generate a cleaner-looking mess.

Before your next build goes out, make one source-of-truth document, run an AI audit against it, and read every final line yourself. That's the boring fix the "Total 65" story points to, and it's the one that keeps your credits real.

Sourabh Gupta

Data Scientist & AI Specialist. Blending a background in data science with practical AI implementation, Sourabh is passionate about breaking down complex neural networks and AI tools into actionable, time-saving workflows for developers and creators.